Two of the most vocal critics of Tesla Inc.’s Autopilot feature renewed their calls for federal and state investigations of the EV maker, claiming the company is engaging in “dangerously misleading and deceptive practices.”

Consumer Watchdog and the Center for Auto Safety once again accused the California-based electric vehicle maker and CEO Elon Musk of putting lives in peril by making claims that the Autopilot technology available on all three models Tesla offers is a fully functional autonomous technology.

The two groups renewed their efforts in the wake of investigations into two Florida deaths earlier this year, both involving drivers who were using Tesla’s Autopilot when their vehicles crashed.

Musk has repeatedly said that the company makes it clear Autopilot is not currently fully autonomous, though he has also said he expects it to be by the end of this year. On the company’s most recent conference call, he noted that most Tesla models on the road are capable of being fully autonomous.


However, the two groups have called for the Federal Trade Commission and the California Department of Motor Vehicles to begin investigations immediately. They contend that Tesla violated Section 5 of the FTC Act, as well as California law, because the EV maker’s descriptions and assertions about Autopilot are “materially deceptive and are likely to mislead consumers into reasonably believing that their vehicles have self-driving or autonomous capabilities.”

The drum banging comes just as Tesla’s stock cratered the day after the company’s second-quarter earnings call on Tuesday. Musk made plenty of claims on the call that concerned analysts, most of them about the company’s profitability going forward. However, he did note that almost all Teslas have the hardware necessary to be self-driving, and that it is the software that is currently keeping the system from being autonomous.

He said that he expected the take rate on that software to increase over time and ultimately, “it will be quite compelling.”

“We expect to offer full self-driving everywhere except the EU. We need to work through regulatory committees to get approvals. It will just take a little more time,” he said. “It’s just a temporary thing, and it’s specific to the EU. We were not present when the rules were drafted. They don’t make a lot of sense, but we still have to (work with them).”

The two safety advocacy groups believe that messages like that embolden users of the system to do things they shouldn’t, such as sleeping behind the wheel, and that when the system fails to respond appropriately to a situation, the results can be catastrophic.


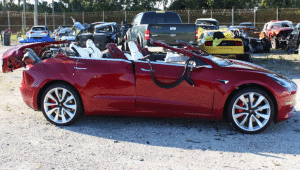

In February, a Model S veered across three lanes of traffic and slammed into a tree in Davie, Florida, and on March 1, a Model 3 drove into the side of a semi-truck’s trailer, shearing off the car’s roof. Autopilot was in use both times, and both drivers died. There have been several other crashes in the past few years, some of them fatal, in which Autopilot played a role.

“It is time for regulators to step in and put a stop to Tesla’s ongoing autopilot deception,” said Adam Scow, Senior Advocate for Consumer Watchdog. “Tesla has irresponsibly marketed its technology as safety enhancing, when instead it is killing people.”

Tesla officials, in particular company co-founder Musk, have consistently maintained that using Autopilot doesn’t mean that drivers no longer need to be engaged in driving. The vehicle requires the driver to put their hands on the wheel periodically.

They’ve also regularly noted that Autopilot is an evolving technology, using data from an ever-growing pool of drivers to help the system “learn” how to handle different situations on the road.

“The massive amount of real-world data gathered from our cars’ eight cameras, 12 ultrasonic sensors, and forward-facing radar, coupled with billions of miles of inputs from real drivers, helps us better understand the patterns to watch out for in the moments before a crash,” the company noted in a statement in May.

“As our quarterly safety reports have shown, drivers using Autopilot register fewer accidents per mile than those driving without it.”

The statement was part of a larger piece announcing an update to Autopilot. The system now has Lane Departure Avoidance and Emergency Lane Departure Avoidance to help drivers stay engaged and in their lane in order to avoid collisions.


Center for Auto Safety Executive Director Jason Levine noted that the group had asked the FTC to ban Tesla from using the term Autopilot, which did not happen. “One year later, there has been more unnecessary, preventable tragedy, and more intentional deception by Tesla, including claims of ‘full self-driving capability.’ If the FTC, and the states, do not stop these unlawful representations, the consequences will squarely fall on their shoulders.”