Hungry? Tired? Frustrated? Toyota plans to reveal a concept vehicle that will be able to not only tell if you are too tired to drive but also read more subtle emotions and adjust the way it drives – or even take over driving duties entirely. It will also be able to converse with a motorist using artificial intelligence.

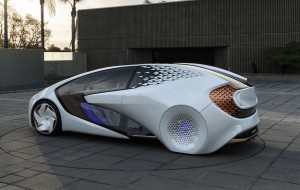

The Japanese giant gave a hint of what it has in mind at last January’s Consumer Electronics Show, or CES, in Las Vegas. A more advanced version of the Concept-i prototype will make its debut at the upcoming Tokyo Motor Show, and Toyota said Monday that it plans to begin testing autonomous electric vehicles by 2020 that will share many of the features found in the concept car.

“By using AI technology, we want to expand and enhance the driving experience, making cars an object of affection again,” said Makoto Okabe, general manager of Toyota’s EV business planning division, during a preview in Tokyo.

Though it was one of the pioneers in battery-electric technology with its original Prius hybrid, Toyota has been slow to embrace more advanced battery systems. But that is expected to change during the next half decade. This summer, Toyota announced a partnership with smaller Japanese maker Mazda to produce a new line of long-range battery-electric vehicles at a plant they will set up in the U.S. The cars are expected to introduce a new type of battery technology called solid-state that promises longer range, lower costs and rapid charging.

Toyota was also slow to embrace autonomous and fully driverless technology, a lag it set out to correct several years ago by launching the Toyota Research Institute – which is also working on AI and robotics technologies.

The Concept-i is meant to bring all those efforts together to “foster a warm and friendly user experience,” the company explained when the first prototype was revealed at the 2017 CES.

Today’s cars already have a significant amount of intelligence built in. The 2018 Mercedes-Benz S-Class, for example, constantly monitors hundreds of parameters, such as steering wheel motions, to tell if a driver is growing drowsy. Toyota’s luxury brand Lexus has similarly used a camera to monitor a driver’s eyes for telltale signs of drowsiness.

The next version of Concept-i, company officials explained, will not only check whether the driver is sleepy but also gauge other emotions and even determine whether they are hungry. It will do this in ways similar to how humans interact, registering facial expressions, hand and body movements and tone of voice.

Toyota has already shown the ability to interact with drivers through a pint-sized robot named Kirobo, which can be plopped atop the instrument panel to act as a friendly companion. But Kirobo doesn’t do much else. In the case of Concept-i, the technology will be built right into the vehicle and could be used to take some direct actions. If necessary, the vehicle will assist a drowsy driver to make sure they stay safe, or even take over driving duties entirely. The car could trigger a scent that enhances awareness or schedule a coffee stop, and it might pull over for a meal if the driver is hungry.

The prototype is meant to be “more than a machine,” according to Toyota’s Okabe.

That fits the name of the prototype. While the “i” might sound like the one in iPhone, it stands not for “intelligent” but for “ai,” the Japanese word for “love.”

Artificial intelligence is expected to become commonplace in tomorrow’s automobiles, especially in the form of autonomous and driverless vehicles. Mercedes’ sibling Smart brand showed off an intelligent prototype, the Vision EQ Fortwo, at the Frankfurt Motor Show last month that can communicate with passengers as well as pedestrians and other drivers.

It is envisioned as the base of a ride-sharing service that would improve traffic flow and reduce highway accidents substantially. Some experts believe such shared vehicles will become the norm, rather than the exception, in decades to come.

Toyota’s two main Japanese rivals are working on their own smart cars, with Nissan expecting to have its first fully autonomous vehicle in production by 2020. Honda, meanwhile, brought a concept to the 2017 CES that showed off potential uses of AI.

Dubbed NeuV – pronounced noo-VEE and short for New Urban Electric Vehicle – this autonomous two-seater is targeted at those living in dense urban environments who typically don’t drive great distances and likely leave their vehicles parked much of the day.

“We designed NeuV to become more valuable to the owner by optimizing and monetizing the vehicle’s down time” by serving in ride-sharing services when, say, the owner is at work, explained Mike Tsay, principal designer for Honda R&D Americas, where NeuV was developed.

NeuV would also be able to interact with drivers and passengers, as if there were a human onboard, reading expressions, sensing emotions and responding to questions.